Understanding modern water testing methods is essential for anyone who wants reliable information about drinking water quality. Households, schools, landlords, rural well owners, facility managers, and public health professionals all face the same basic question: how do you know what is actually in the water? The answer depends on the contaminant of concern, the level of accuracy required, the urgency of the situation, and the tools available. Today, water can be evaluated through laboratory analysis, home test kits, and increasingly through connected digital devices and smart water sensors. Each approach has strengths, limitations, and appropriate use cases. This article explains how these water quality methods work, what they can and cannot tell you, and how to choose the right strategy for protecting drinking water safety.

Drinking water may contain a wide range of physical, chemical, and biological hazards. Some are naturally occurring, such as arsenic, manganese, hardness minerals, or radionuclides in groundwater. Others result from infrastructure, agriculture, industrial activity, treatment failures, or household plumbing. Lead can leach from service lines and fixtures. Nitrate can enter wells from fertilizer or septic systems. Bacteria and viruses may indicate fecal contamination. Disinfection byproducts can form during treatment. Even when water looks clear and tastes acceptable, harmful contaminants may still be present at concentrations that matter for health. That is why testing matters.

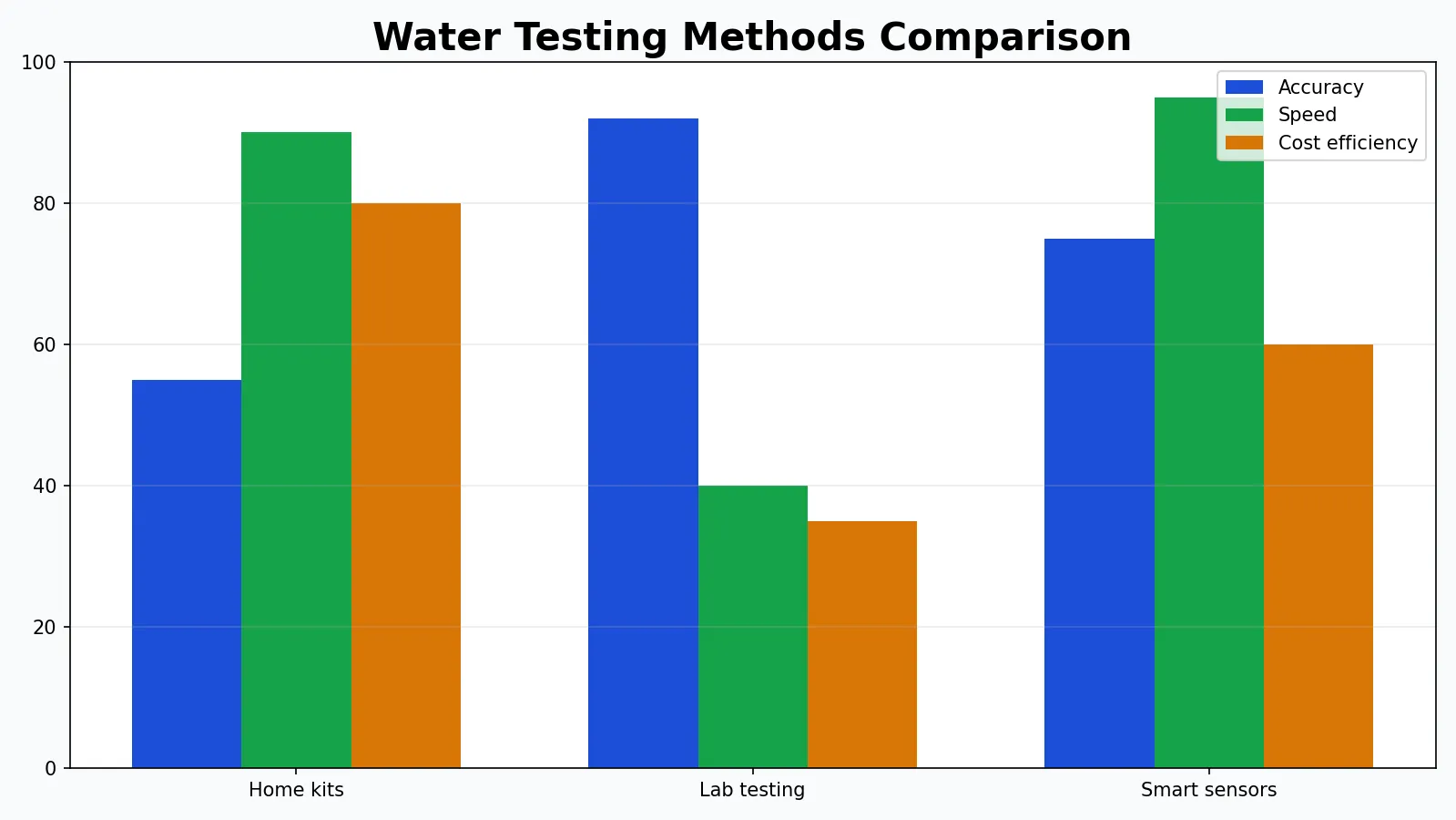

At the same time, not all testing approaches are equivalent. A dip strip that estimates pH or hardness is not the same as a certified laboratory measurement of lead at parts-per-billion levels. A continuous conductivity probe can help flag a change in water chemistry, but it may not identify the exact contaminant responsible. A microbiological screen may indicate the need for immediate action, while a full analytical panel may be necessary to confirm compliance, diagnose a source problem, or design treatment. In practice, home vs lab testing is not an either-or debate. The best strategy is often layered: screening at home, confirmation in a lab, and monitoring over time with digital tools where appropriate.

For readers who want a broader overview of sampling and analysis, PureWaterAtlas also provides a complete guide to drinking water testing and analysis that complements the more method-focused discussion below.

Why water testing matters for drinking water safety

Water testing is fundamentally about risk reduction. Safe drinking water supports hydration, child development, pregnancy, immune function, kidney health, and general public health. Unsafe drinking water can contribute to acute gastrointestinal illness, long-term exposure to toxic metals, developmental harm, and other adverse outcomes depending on the contaminant, dose, and duration of exposure. The World Health Organization emphasizes that contaminated water remains a major global health concern, especially where sanitation, treatment, and source protection are inconsistent.

In the United States, public water systems are regulated under the Safe Drinking Water Act, and the U.S. Environmental Protection Agency establishes standards and treatment techniques for many contaminants. But regulation does not mean every water source is risk-free, and it does not cover all situations equally. Private wells, for example, are generally the responsibility of the owner. Even in regulated systems, contamination can arise from premise plumbing, localized distribution problems, seasonal source changes, or unusual events such as flooding, fires, or line breaks. The CDC’s healthy drinking water resources also stress that water quality concerns can differ by source and setting.

Testing also matters because water problems are not always obvious to the senses. Taste, odor, and color can provide clues, but they are not reliable safety indicators. Iron may stain sinks and laundry without posing the same type of health risk associated with lead, while nitrate or arsenic can be present in water that appears perfectly normal. Cloudiness may suggest suspended particles or microbial risk, but many pathogens are invisible. Odor can indicate sulfur compounds, chlorination, or industrial contamination, but some dangerous chemicals have no clear smell at relevant concentrations.

In practical terms, accurate testing helps answer several critical questions:

- Is the water safe to drink, cook with, and use for infant formula?

- Is there a need for urgent action, such as boiling water, using bottled water, or avoiding consumption?

- Are contaminants coming from the source water, treatment failure, or building plumbing?

- What type of filtration or treatment system is appropriate?

- Has water quality changed over time?

- Is a problem isolated or persistent?

The scientific foundation of water testing methods

To compare testing approaches fairly, it helps to understand the basic scientific categories used in water analysis. Most water testing methods target one or more of the following domains: physical parameters, chemical constituents, microbiological indicators, and operational or treatment-related metrics.

Physical parameters

Physical measurements describe how water looks or behaves. These include temperature, turbidity, color, conductivity, and total dissolved solids. They do not necessarily identify specific contaminants, but they can indicate a change in source conditions, treatment performance, or contamination potential.

- Turbidity measures cloudiness caused by suspended particles. Elevated turbidity can interfere with disinfection and may signal runoff, sediment disturbance, or treatment issues.

- Conductivity reflects the water’s ability to carry electrical current, which generally rises as dissolved ions increase. It is often used as a proxy for salinity or overall mineral content.

- Total dissolved solids (TDS) estimates the amount of dissolved substances in water, though it does not identify which substances are present.

- Temperature influences microbial growth, disinfection performance, corrosion, and dissolved oxygen behavior.
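Many handheld TDS meters do not weigh dissolved solids directly. They measure conductivity and multiply it by a conversion factor, which is one reason a TDS number cannot say which substances are present. The sketch below illustrates that estimate. It assumes a factor of 0.65, one value within the roughly 0.5 to 0.7 range often used. Real meters may use a different or adjustable factor, and the function name is hypothetical.

```python
def estimate_tds_mg_per_l(conductivity_us_per_cm: float, factor: float = 0.65) -> float:
    """Estimate TDS (mg/L) from conductivity (µS/cm) using a linear factor.

    The factor is an assumption: the true ratio depends on which ions dominate
    the water, so the result is an estimate, not an identification of what is
    dissolved.
    """
    if conductivity_us_per_cm < 0:
        raise ValueError("conductivity cannot be negative")
    return conductivity_us_per_cm * factor


# Example: a reading of 500 µS/cm with the assumed factor of 0.65
print(round(estimate_tds_mg_per_l(500), 1))
```

Two waters with the same conductivity can have different compositions, so this estimate works best for tracking change in one water source over time.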

Chemical parameters

Chemical testing can target inorganic contaminants such as lead, arsenic, fluoride, nitrate, copper, iron, manganese, calcium, magnesium, and chloride, as well as organic compounds such as pesticides, solvents, petroleum components, and certain industrial chemicals. Some tests are highly specific and quantitative, while others are broad screens.

Many chemical contaminants are measured in milligrams per liter (mg/L), roughly equivalent to parts per million (ppm) in dilute water, or in micrograms per liter (µg/L), roughly equivalent to parts per billion (ppb). Interpreting these values requires context: a concentration that is trivial for sodium may be serious for lead. Method sensitivity, calibration, matrix effects, and sample preservation all influence the final reported number.
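The same concentration can appear as mg/L on one report and µg/L on another. Converting both values to a single unit before comparing them avoids misreading a result by a factor of 1,000. The sketch below illustrates this. The threshold value in the example is a placeholder, not a regulatory limit, so check current EPA or local values before you compare real results.

```python
MICROGRAMS_PER_MILLIGRAM = 1000


def mg_per_l_to_ug_per_l(value_mg_per_l: float) -> float:
    """Convert mg/L (about ppm in dilute water) to µg/L (about ppb)."""
    return value_mg_per_l * MICROGRAMS_PER_MILLIGRAM


def ug_per_l_to_mg_per_l(value_ug_per_l: float) -> float:
    """Convert µg/L (about ppb) to mg/L (about ppm)."""
    return value_ug_per_l / MICROGRAMS_PER_MILLIGRAM


def exceeds(result_ug_per_l: float, threshold_ug_per_l: float) -> bool:
    """Compare a result and a threshold only after both are in µg/L."""
    return result_ug_per_l > threshold_ug_per_l


# A lab report of 0.012 mg/L is the same concentration as 12 µg/L.
result = mg_per_l_to_ug_per_l(0.012)
print(result)
print(exceeds(result, threshold_ug_per_l=10))  # placeholder threshold
```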

Microbiological parameters

Microbial testing addresses bacteria, and in more specialized contexts, viruses and protozoa. Most routine drinking water microbiology does not test directly for every pathogen. Instead, it often uses indicator organisms such as total coliforms and Escherichia coli to infer contamination risk. The presence of E. coli is particularly concerning because it suggests fecal contamination and a possible pathway for disease-causing microorganisms.

Microbiology is a major reason that water testing accuracy depends not only on the analytical technology but also on proper sample collection. A contaminated bottle, dirty faucet aerator, or poorly timed sample can create misleading results.

Operational and treatment-related metrics

Some measurements help assess how water treatment or plumbing conditions are functioning rather than identifying a pollutant directly. Examples include free chlorine residual, oxidation-reduction potential, pH, alkalinity, hardness, and corrosion indicators. These values can influence whether metals leach from plumbing, whether disinfection remains effective, and whether treatment systems are optimized.

The three major categories: lab, home, and smart sensor approaches

When people compare water testing methods, they are usually deciding among three broad approaches:

- Laboratory testing: samples are collected and sent to a professional analytical laboratory.

- Home testing: the user performs a test on-site with strips, reagents, handheld meters, or simple kits.

- Smart sensor monitoring: digital devices continuously or semi-continuously track selected parameters and often transmit data automatically.

These approaches are not interchangeable. They answer different questions and operate at different levels of specificity, precision, and cost. The rest of this article examines each one in detail.

Lab water testing: what it is and why it remains the reference standard

Lab water testing involves collecting a sample according to defined procedures and submitting it to a laboratory that uses validated analytical techniques. In many scenarios, this is the most reliable path when you need defensible results, low detection limits, or broad contaminant coverage. It is especially important for contaminants that matter at very low concentrations, including lead, arsenic, volatile organic compounds, PFAS in specialized programs, and many regulated microbiological tests.

How laboratory analysis works

The laboratory process usually includes several steps:

- Selection of the analytes or test panel.

- Use of the correct sample container, preservative, and holding time.

- Documented sample collection, often with instructions for first-draw, flushed, or raw source samples depending on the question.

- Transport under the required conditions, such as cooling for some microbiological or chemical analyses.

- Instrumental analysis using methods appropriate to the target contaminant.

- Quality control procedures such as blanks, standards, duplicate analysis, and calibration verification.

- Reporting of results with units, detection limits, and often method references.
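Duplicate analysis is one of the quality control steps listed above. Agreement between duplicates is commonly expressed as relative percent difference (RPD). The sketch below computes it. The 20% acceptance limit is a hypothetical example, because actual limits depend on the method and on the laboratory's quality plan.

```python
def relative_percent_difference(first: float, second: float) -> float:
    """RPD = |a - b| / mean(a, b) * 100, a common duplicate-agreement metric."""
    if first < 0 or second < 0:
        raise ValueError("concentrations cannot be negative")
    mean = (first + second) / 2
    if mean == 0:
        return 0.0  # both results are zero, so they agree exactly
    return abs(first - second) / mean * 100


def duplicates_acceptable(first: float, second: float, limit_percent: float = 20.0) -> bool:
    """Hypothetical acceptance check; real limits come from the method or lab QA plan."""
    return relative_percent_difference(first, second) <= limit_percent


# Duplicate nitrate results of 4.8 and 5.2 mg/L differ by 8% of their mean.
print(round(relative_percent_difference(4.8, 5.2), 1))
print(duplicates_acceptable(4.8, 5.2))
```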

Common laboratory techniques include inductively coupled plasma mass spectrometry for trace metals, ion chromatography for anions such as nitrate, gas chromatography for volatile organics, liquid chromatography for certain organic contaminants, membrane filtration or presence-absence methods for microbes, and spectrophotometric methods for selected chemical parameters.

Strengths of lab water testing

- High sensitivity: laboratories can often detect contaminants at much lower concentrations than home tests.

- Better specificity: they can identify exactly which metal, compound, or microorganism is present.

- Quantitative results: concentrations are reported numerically, allowing comparison with standards or treatment goals.

- Broad analytical coverage: multi-parameter panels can assess a wide range of contaminants.

- Quality assurance: established methods and controls improve confidence in the result.

- Regulatory relevance: laboratory data are generally necessary for compliance, official investigation, or professional treatment design.

Limitations of laboratory testing

- Higher cost than simple home kits.

- Longer turnaround time, especially for specialized contaminants.

- Sampling errors can still occur, particularly if bottles, timing, or preservation are incorrect.

- Snapshot nature: one sample may not reflect daily or seasonal variation.

- Requires planning: choosing the right panel is not always straightforward.

When lab testing is most appropriate

Laboratory analysis is the best option when you are dealing with a private well, buying a home, investigating plumbing-related metals, assessing contamination after flooding, checking for regulated contaminants, evaluating treatment performance, or responding to persistent health or aesthetic concerns that screening tests cannot explain. It is also the preferred route when the consequences of error are high.

If you are weighing the tradeoffs in more practical terms, PureWaterAtlas has a separate discussion of DIY vs professional water testing that helps frame when confirmation testing is worth the added cost.

Home water testing: accessible screening with important limitations

Home testing kits are popular because they are fast, convenient, and comparatively inexpensive. They can be useful tools for routine screening, troubleshooting obvious changes, and helping households decide whether more advanced testing is warranted. However, the term “home test” covers a wide range of technologies, from basic color strips to digital handheld meters and reagent kits with comparator charts.

Common home testing formats

- Test strips: paper or plastic strips impregnated with reagents that change color when exposed to water.

- Drop-count or vial reagent kits: liquid or powder reagents are added to a water sample, producing a color change that is compared to a scale.

- Handheld digital meters: electronic devices measure parameters such as pH, conductivity, TDS, or sometimes free chlorine.

- Presence-absence microbiological kits: simple at-home incubation methods that screen for coliform bacteria.

Parameters commonly tested at home

Home kits often measure pH, hardness, alkalinity, chlorine, iron, copper, nitrate, nitrite, manganese, TDS, and occasionally lead. Some microbiology kits screen for bacteria, though the quality and interpretability of these kits vary significantly. For a step-by-step overview of practical self-screening, see this guide on how to test drinking water at home.

Strengths of home testing

- Speed: some results are available in minutes.

- Convenience: no shipping, scheduling, or lab coordination is required.

- Affordability: useful for preliminary checks or repeated screening.

- User empowerment: households can investigate a concern quickly and monitor simple treatment changes.

- Good for routine parameters: pH, hardness, chlorine, and conductivity are often well suited to on-site measurement.

Limitations of home testing

- Lower precision than professional analytical methods.

- Limited contaminant scope: many harmful compounds are not covered.

- Subjective interpretation: color matching can vary by lighting, user vision, and timing.

- Potential interferences: colored water, oxidants, mineral content, or reagent age can distort results.

- Variable sensitivity: some kits cannot detect contaminants at the concentrations that matter most for health decisions.
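In a color-matching kit, the user picks the chart swatch closest to the reacted sample. Lighting and individual vision introduce error at this step. As an illustration only, the sketch below performs the same matching numerically with RGB values, such as values taken from a photo. The chart colors and concentrations are invented placeholders, not values from any real kit.

```python
import math

# Hypothetical comparator chart: (concentration in mg/L, RGB swatch color).
CHART = [
    (0.0, (250, 250, 240)),
    (0.5, (240, 220, 200)),
    (1.0, (230, 180, 160)),
    (2.0, (210, 130, 120)),
    (5.0, (180, 70, 80)),
]


def nearest_chart_value(sample_rgb: tuple[int, int, int]) -> float:
    """Return the chart concentration whose swatch is closest in RGB space."""
    def distance(entry):
        return math.dist(sample_rgb, entry[1])

    concentration, _ = min(CHART, key=distance)
    return concentration


print(nearest_chart_value((228, 175, 158)))  # closest to the 1.0 mg/L swatch
```

This approach does not remove the underlying limitation. A photo taken under warm or dim light shifts every RGB value, and straight-line RGB distance is only a crude stand-in for how people perceive color.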

Water testing accuracy in home kits

Water testing accuracy is where many misunderstandings occur. A home kit can be accurate enough for one purpose and inadequate for another. For example, a TDS meter may consistently estimate dissolved ion concentration well enough to track reverse osmosis performance, but it cannot tell whether the dissolved solids are harmless calcium or problematic nitrate. A pH strip may indicate that water is mildly acidic, but it will not characterize corrosion risk with the same confidence as a more complete water chemistry profile. A simple lead screen may be unable to quantify low but still important concentrations with the certainty needed for decisions involving infants or pregnant households.

That does not make home tests useless. It means the result must be matched to the decision. Screening is not confirmation. Trend monitoring is not compound-specific diagnosis. Estimation is not the same as compliance-grade quantification.
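The reverse osmosis example above shows what "fit for the decision" means in practice. TDS readings taken before and after an RO unit give an approximate percent rejection. This figure is useful for spotting trends, even though it cannot show which specific ions passed through. The readings and the 80% alert level below are hypothetical.

```python
def percent_rejection(feed_tds: float, permeate_tds: float) -> float:
    """Approximate salt rejection: (1 - permeate / feed) * 100."""
    if feed_tds <= 0:
        raise ValueError("feed TDS must be positive")
    return (1 - permeate_tds / feed_tds) * 100


# Hypothetical readings from a handheld TDS meter, in mg/L.
feed, permeate = 400.0, 24.0
rejection = percent_rejection(feed, permeate)
print(f"{rejection:.1f}% rejection")

# Hypothetical maintenance trigger; manufacturers publish their own expectations.
if rejection < 80:
    print("Rejection is falling; consider membrane service and a lab check.")
```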

Smart water sensors: real-time monitoring and the rise of connected water data

Smart water sensors are one of the most important developments in modern water quality methods. These devices continuously or repeatedly measure selected parameters and often transmit readings through Wi-Fi, Bluetooth, cellular networks, or building management systems. Their greatest advantage is not necessarily that they replace laboratory testing, but that they make water quality changes visible over time.

What smart sensors usually measure

Smart or digital systems commonly monitor:

- pH

- conductivity

- TDS

- temperature

- oxidation-reduction potential

- free chlorine or other disinfectant residuals in some systems

- turbidity in more advanced installations

- pressure and flow, which are not quality parameters themselves but can help identify events affecting water quality

Some specialized platforms can also infer anomalies through combinations of sensor data, alerting users to sudden source changes, treatment breakthrough, or equipment failure. If you want a closer look at tools in this category, PureWaterAtlas covers digital water testing devices and their practical applications.
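A connected system can flag a sudden change by comparing each new reading with a rolling baseline of recent readings. The sketch below is a generic illustration, not a description of how any particular product works. The window size and tolerance are hypothetical tuning choices.

```python
from collections import deque
from statistics import median


def flag_spikes(readings, window=5, tolerance=0.25):
    """Yield (index, value) for readings that differ from the median of the
    previous `window` readings by more than `tolerance` (as a fraction).
    """
    recent = deque(maxlen=window)
    for i, value in enumerate(readings):
        if len(recent) == window:
            baseline = median(recent)
            if baseline and abs(value - baseline) / baseline > tolerance:
                yield i, value
        recent.append(value)


# Hypothetical conductivity readings in µS/cm; the jump at index 6 is flagged.
conductivity = [410, 412, 408, 411, 409, 410, 560, 415, 411]
print(list(flag_spikes(conductivity)))
```

A median baseline is less distorted by a single spike than a mean. The flag still only shows that conductivity changed. Identifying what caused the change requires follow-up testing.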

Strengths of smart sensor systems

- Continuous visibility: unlike one-time sampling, sensors can show trends, spikes, and sudden shifts.

- Rapid alerts: useful for operational response when water quality changes unexpectedly.

- Remote access: data can often be reviewed from a phone or dashboard.

- Operational optimization: helpful for treatment systems, building water management, and maintenance scheduling.

- Supports preventive action: users can act before a small problem becomes a larger one.

Limitations of smart water sensors

- Usually indirect: many sensors measure proxies rather than specific contaminants.

- Calibration drift: sensors require maintenance and recalibration.

- Biofouling and scaling: deposits or microbial growth can affect readings over time.

- Not universal: many important contaminants still require lab confirmation.

- Data interpretation challenges: more data do not automatically mean clearer answers.
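Calibration drift is usually caught by reading a reference solution of known value and comparing the result with the sensor's output. The sketch below shows that comparison. The 1413 µS/cm value is a widely sold conductivity check standard. The 2% tolerance and the function names are hypothetical, so replace them with the manufacturer's guidance.

```python
def drift_percent(sensor_reading: float, reference_value: float) -> float:
    """Signed percent difference between a sensor reading and a known standard."""
    if reference_value == 0:
        raise ValueError("reference value must be nonzero")
    return (sensor_reading - reference_value) / reference_value * 100


def needs_recalibration(sensor_reading: float, reference_value: float,
                        tolerance_percent: float = 2.0) -> bool:
    """True when drift exceeds the (hypothetical) tolerance in either direction."""
    return abs(drift_percent(sensor_reading, reference_value)) > tolerance_percent


# A probe reading 1458 µS/cm in a 1413 µS/cm standard has drifted about +3.2%.
print(round(drift_percent(1458, 1413), 1))
print(needs_recalibration(1458, 1413))
```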

Where smart sensors fit best

Smart monitoring works best when conditions can change quickly or when trend data matter. Examples include private water systems with treatment equipment, schools and commercial buildings trying to manage stagnation or disinfectant residuals, point-of-entry systems that need performance tracking, and rural properties with variable source water. For more on this monitoring model, see PureWaterAtlas on real-time water monitoring.

Home vs lab testing: how to decide which one you need

The phrase "home vs lab testing" can be misleading because the right answer often depends on the specific parameter and the specific decision. Instead of asking which is universally better, it is more useful to ask which method is fit for purpose.

| Question | Home Testing | Lab Water Testing | Smart Sensors |
|---|---|---|---|
| Need quick screening today? | Strong option | Usually slower | Strong if already installed |
| Need precise contaminant concentration? | Limited | Best option | Usually limited |
| Need to detect lead, arsenic, or VOCs reliably? | Often inadequate | Best option | Generally not suitable alone |
| Need ongoing trend monitoring? | Manual repeat testing required | Possible but expensive | Best option |
| Need regulatory or professional documentation? | Usually not sufficient | Best option | Supportive, not usually sufficient alone |
| Need low-cost routine checks for pH or hardness? | Good option | Possible but not always necessary | Good for ongoing monitoring |

In general:

- Use home tests for quick screening, routine operational checks, and simple parameters.

- Use lab tests for safety-critical contaminants, regulatory confidence, troubleshooting, and treatment design.

- Use smart sensors for tracking change over time, detecting anomalies, and supporting ongoing system management.

Contaminant-specific examples: which method works best?

Lead

Lead is a classic example of why method selection matters. It often enters water from plumbing materials rather than the source itself, so sampling design matters as much as analytical technology. Lead can fluctuate depending on stagnation time, water chemistry, and recent fixture use. Home lead kits may provide a rough screen, but lab water testing is typically the appropriate method for accurate low-level assessment. First-draw and flushed samples may be used for different diagnostic purposes.

Nitrate

Nitrate is especially important for private wells in agricultural or septic-impacted areas. Some home kits can screen for nitrate reasonably well, but confirmation in a lab is advisable when elevated results are detected, when infants are in the home, or when concentrations may approach health-based thresholds. Seasonal variation can also matter.

Hardness and alkalinity

These are often well suited to home testing and digital meters. While laboratory analysis provides more detail, many households can make useful treatment decisions based on reliable field measurements, especially when selecting or adjusting softening systems.

pH and conductivity

These parameters are frequently measured on site because they can change during sample transport and because digital probes can provide immediate feedback. That said, poor calibration can undermine accuracy, so meter maintenance matters.
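Most pH meters are calibrated against at least two buffer solutions. The meter then converts electrode voltage to pH using the straight line between those two points. The sketch below shows a linear two-point calibration. The millivolt readings are hypothetical. Real meters also apply temperature compensation, which this sketch omits. An ideal electrode at 25 °C changes by about 59.16 mV per pH unit, so comparing the fitted slope with that value gives a rough check of electrode health.

```python
IDEAL_SLOPE_MV_PER_PH = -59.16  # Nernstian slope at 25 °C; mV falls as pH rises


def two_point_calibration(buffer_a, buffer_b):
    """Fit mV = slope * pH + intercept from two (pH, mV) buffer readings."""
    (ph_a, mv_a), (ph_b, mv_b) = buffer_a, buffer_b
    if ph_a == ph_b:
        raise ValueError("buffers must have different pH values")
    slope = (mv_b - mv_a) / (ph_b - ph_a)
    intercept = mv_a - slope * ph_a
    return slope, intercept


def mv_to_ph(mv, slope, intercept):
    """Invert the calibration line to convert a sample reading to pH."""
    return (mv - intercept) / slope


# Hypothetical readings: pH 7.00 buffer at 2 mV, pH 4.00 buffer at 172 mV.
slope, intercept = two_point_calibration((7.00, 2.0), (4.00, 172.0))
efficiency = slope / IDEAL_SLOPE_MV_PER_PH * 100
print(f"slope {slope:.2f} mV/pH, {efficiency:.0f}% of ideal")
print(f"sample at -40 mV reads pH {mv_to_ph(-40.0, slope, intercept):.2f}")
```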

Coliform bacteria

Microbiological screening at home may help flag a problem, but sample contamination is common if technique is poor. Confirmatory or primary microbiological analysis is generally better performed through established laboratory methods, especially when a well, cistern, or vulnerable distribution setup is involved.

Volatile organic compounds and pesticides

These are not practical targets for ordinary home tests in most cases. Laboratory methods are required because the compounds are chemically diverse, often present at low concentrations, and sensitive to sampling and handling conditions.

How results should be interpreted

A water test result is only useful if it is interpreted correctly. The number alone does not provide the full story. Results must be evaluated in relation to health-based standards, aesthetic guidelines, plumbing effects, treatment goals, and the conditions under which the sample was taken.

Health-based standards versus aesthetic concerns

Some water quality issues mainly affect taste, appearance, or appliance performance. Hardness, iron, manganese, and sulfur odors can be highly noticeable and frustrating, but their significance differs from contaminants with stronger direct health implications. Other contaminants, such as lead, nitrate, arsenic, or microbial indicators, often require more urgent interpretation. The fact that water smells or stains does not automatically mean it is unsafe, and the absence of these signs does not mean it is safe.

Detection limits and non-detect results

A “non-detect” does not always mean absolute absence. It means the contaminant was not detected above the method’s reporting limit or detection limit under the test conditions. A more sensitive method might detect smaller amounts. This matters when comparing home screens to professional methods, especially for contaminants that are concerning at low concentrations.
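The practical effect of a reporting limit can be expressed as a simple rule. A non-detect supports the conclusion "below the threshold" only when the method's reporting limit is at or below that threshold. The function below is a hypothetical sketch of that reasoning, and the numbers in the example are placeholders.

```python
from typing import Optional


def interpret(result: Optional[float], reporting_limit: float, threshold: float) -> str:
    """Interpret a result, where None means 'not detected above the reporting limit'.

    All values must be in the same unit (for example, µg/L).
    """
    if result is None:
        if reporting_limit <= threshold:
            return "non-detect: below threshold"
        return "non-detect: inconclusive, reporting limit is above threshold"
    if result > threshold:
        return "detected: above threshold"
    return "detected: at or below threshold"


# Placeholder values in µg/L: a screen with a 15 µg/L limit cannot rule out
# a concern at 5 µg/L, while a method with a 1 µg/L limit can.
print(interpret(None, reporting_limit=15, threshold=5))
print(interpret(None, reporting_limit=1, threshold=5))
```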

Single samples versus patterns

Water chemistry can change with season, rainfall, pumping, plumbing stagnation, treatment maintenance, and distribution conditions. One result may be enough to identify a major problem, but repeat testing often improves confidence. Smart monitoring can be particularly helpful here because trends may reveal patterns that single samples miss.
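When repeat samples are available, a short summary often tells you more than any single number. The sketch below reports the count, median, maximum, and the number of samples that exceeded a placeholder threshold. The sample values are hypothetical.

```python
from statistics import median


def summarize(samples, threshold):
    """Summarize repeat results (same unit) against a threshold."""
    if not samples:
        raise ValueError("no samples to summarize")
    exceedances = sum(1 for value in samples if value > threshold)
    return {
        "count": len(samples),
        "median": median(samples),
        "max": max(samples),
        "exceedances": exceedances,
    }


# Hypothetical seasonal nitrate results for one well (mg/L), placeholder threshold.
nitrate = [3.1, 4.0, 8.7, 11.2, 5.5, 3.4]
print(summarize(nitrate, threshold=10))
```

Here the median looks reassuring, but one sample exceeded the threshold. A single sample taken on a different date could have missed that result.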

Source versus plumbing contributions

If a contaminant is found, the next question is where it came from. A well may contribute arsenic or nitrate. Household plumbing may contribute copper or lead. Building stagnation may reduce disinfectant residual. Water testing plans should be designed to answer this question where possible, using sample location and timing strategically.
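For metals such as lead or copper, one way to use sample timing strategically is to compare two samples from the same tap. The first-draw sample is taken after water has sat in the plumbing, and the flushed sample is taken after running the tap. The rule of thumb below, including its 2x ratio, is a hypothetical simplification for illustration. Real diagnosis usually involves more samples and professional interpretation.

```python
def likely_contribution(first_draw: float, flushed: float, ratio: float = 2.0) -> str:
    """Rough hint about where a metal may be coming from, using two tap samples.

    A first-draw level much higher than the flushed level points toward fixtures
    or premise plumbing. Similar levels suggest the metal may come from further
    upstream, such as a service line or the source.
    """
    if first_draw < 0 or flushed < 0:
        raise ValueError("concentrations cannot be negative")
    if flushed == 0:
        return "plumbing likely" if first_draw > 0 else "not detected in either sample"
    if first_draw / flushed >= ratio:
        return "plumbing likely"
    return "source or service line possible"


# Hypothetical lead results in µg/L from the same kitchen tap.
print(likely_contribution(first_draw=12.0, flushed=2.0))
```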

Sampling quality: the hidden factor that affects all methods

Even the best technology cannot compensate for poor sampling. Across all water testing methods, sample quality is often the hidden variable that determines whether the result is useful.

Common sampling mistakes

- Using the wrong container

- Touching the inside of a sterile bottle or cap

- Failing to follow first-draw or flushed instructions

- Letting water sit too long before analysis

- Improper storage temperature

- Collecting from a faucet with a dirty aerator for microbiology

- Testing immediately after changing filters without understanding stabilization time

These errors can create false positives, false negatives, or results that simply do not answer the intended question. For households, this is one of the strongest arguments for clear written instructions and, when necessary, professional sampling support.
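Holding time is one of the easier mistakes to check mechanically. Record when the sample was collected and compare the elapsed time with the limit in the laboratory's instructions. The 30-hour limit below is only an example value. Always use the holding time that the laboratory specifies for the analysis you requested.

```python
from datetime import datetime, timedelta


def within_holding_time(collected: datetime, received: datetime,
                        max_hold: timedelta) -> bool:
    """True if the sample reached the lab before its holding time expired."""
    if received < collected:
        raise ValueError("received time is earlier than collection time")
    return received - collected <= max_hold


# Example only: use the holding time on your lab's instructions.
collected = datetime(2024, 5, 6, 7, 30)
received = datetime(2024, 5, 7, 9, 0)
print(within_holding_time(collected, received, max_hold=timedelta(hours=30)))
```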

Regulatory context and thresholds

In drinking water science, standards and thresholds vary by contaminant and jurisdiction. In the United States, public water system standards are largely set by EPA through Maximum Contaminant Levels, treatment techniques, and related requirements. Private well owners are not usually regulated the same way, but the same health-based reference values are still relevant for risk assessment. The U.S. Geological Survey water resources program also provides valuable context on groundwater, source conditions, and national water-quality patterns.

It is important to distinguish among:

- Health-based standards: values intended to reduce risk from long-term or short-term exposure.

- Aesthetic recommendations: values related to taste, odor, color, or staining.

- Operational targets: values used to manage treatment or corrosion control.

- Method limits: what a specific test can reliably detect or quantify.

This distinction is central to interpreting water testing accuracy. A method may be technically accurate within its range and still be the wrong choice if its detection limit is higher than the threshold you care about.

Using test results to choose treatment

Testing should lead to action when appropriate, but treatment should be matched to the actual problem. Installing filtration without a sound diagnosis can waste money and leave the true risk unresolved. Activated carbon, reverse osmosis, ion exchange, oxidation-filtration systems, ultraviolet disinfection, chlorination, and softening all solve different problems.

This is one reason thorough water quality methods matter: the right data support the right intervention. If test results point to a need for treatment, PureWaterAtlas also explains how to choose the right water treatment system based on contaminant type and household goals.

A practical framework for choosing among water testing methods

If you are unsure which path to take, this decision framework can help:

Choose home testing first when

- You want a quick check of pH, hardness, chlorine, or TDS.

- You are comparing water before and after a filter change.

- You have noticed taste, odor, or scale issues and want a low-cost screen.

- You understand that abnormal results may need laboratory confirmation.

Choose lab testing first when

- You use a private well or another unregulated source.

- You are concerned about lead, arsenic, nitrate, bacteria, VOCs, or other high-concern contaminants.

- You are buying or selling a property.

- You need treatment design data or reliable documentation.

- You have vulnerable household members and low tolerance for uncertainty.

Choose smart monitoring when

- You already have treatment equipment that needs performance tracking.

- You want alerts for sudden changes in water quality indicators.

- You manage a building or water system where conditions vary over time.

- You understand that many sensors monitor proxies, not every hazardous contaminant.
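The framework above can also be written as a simple checklist function. This hypothetical sketch mirrors only some of the bullets in this section and does not replace judgment. The contaminant names and flags are illustrative.

```python
HIGH_CONCERN = {"lead", "arsenic", "nitrate", "bacteria", "vocs"}
ROUTINE = {"ph", "hardness", "chlorine", "tds"}


def suggest_methods(concerns, private_well=False, property_sale=False,
                    vulnerable_household=False, has_treatment_equipment=False):
    """Return a sorted list of suggested approaches based on this section's bullets."""
    concerns = {c.lower() for c in concerns}
    methods = set()
    if (concerns & HIGH_CONCERN or private_well or property_sale
            or vulnerable_household):
        methods.add("lab")
    if concerns & ROUTINE:
        methods.add("home")
    if has_treatment_equipment:
        methods.add("smart monitoring")
    return sorted(methods) or ["home"]  # default to a low-cost screen first


print(suggest_methods({"hardness"}))
print(suggest_methods({"pH", "nitrate"}, private_well=True))
```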

Frequently asked questions

Are home water tests accurate enough to trust?

They can be useful for screening and routine parameters such as pH, hardness, chlorine, or TDS, but they are not always sufficient for low-level toxic contaminants or regulatory decisions. Their reliability depends on the parameter, the kit design, the user’s technique, and the detection range.

Is lab water testing always better?

For specificity, sensitivity, and formal confidence, laboratory analysis is usually the stronger option. But “better” depends on the question. If you need immediate pH or conductivity data, a well-maintained field meter may be more practical. Labs are best when accurate quantification and contaminant identification matter most.

Can smart water sensors detect all contaminants?

No. Most smart sensors track a limited set of physical or chemical indicators. They are excellent for trend monitoring and alerts, but they do not replace laboratory testing for many metals, organics, or microbiological contaminants.

How often should drinking water be tested?

The answer depends on the source, prior results, local risks, plumbing materials, and any change in taste, odor, appearance, or health concern. Private wells generally require periodic testing, and any major event such as flooding, repairs, or source changes should prompt additional evaluation.

What is the biggest mistake people make when comparing home vs lab testing?

The biggest mistake is assuming all tests answer the same question. A screening test may show that something changed, while a lab test may reveal exactly what changed and by how much. Comparing them without considering purpose leads to confusion.

Conclusion

The most effective water testing methods are the ones matched to the contaminant, the level of risk, and the decision you need to make. Lab water testing remains the reference standard for precise, low-level, and broad-spectrum analysis. Home kits provide valuable first-line screening and practical household insight when used within their limits. Smart water sensors add something neither of the other approaches can fully provide: continuous awareness of change over time. Rather than treating these methods as competitors, it is more scientifically accurate to see them as complementary tools in a layered water safety strategy. For drinking water, good testing is not just about collecting numbers. It is about obtaining reliable evidence, interpreting it correctly, and using it to protect health with informed action.
