The water testing landscape is changing rapidly as public health needs, aging infrastructure, climate pressures, and advances in analytical science converge. Drinking water testing is no longer limited to periodic laboratory snapshots taken days or weeks apart. It is evolving into a more continuous, data-rich, and predictive discipline that combines chemistry, microbiology, engineering, data science, and public health surveillance. For households, utilities, regulators, and researchers, this shift matters because water quality can change quickly, contaminants can occur at extremely low concentrations, and some risks arise from complex mixtures rather than a single pollutant measured in isolation. The next generation of water testing technologies aims to make detection faster, more sensitive, more automated, and more useful for real-world decisions.

At its core, future water testing refers to the technologies, methods, and data systems that will define how water quality is measured over the coming years. That includes miniaturized sensors, networked monitoring platforms, machine-learning tools, advanced laboratory instruments, molecular microbiology, cloud-based analytics, and new field-ready devices. Together, these tools are reshaping how contaminants are detected, how results are interpreted, and how contamination events are prevented. If older models of testing were primarily reactive, the new model is increasingly preventive and adaptive.

For anyone following water testing topics, this evolution is not just technical progress. It affects how quickly a boil-water advisory can be triggered, how effectively lead exposure can be reduced, how utilities track disinfection byproducts, and how private well owners respond to changing groundwater conditions. It also changes expectations. Consumers increasingly want near real-time information, not a single annual report. Utilities want early warning systems, not only post-event confirmation. Regulators and scientists want more robust evidence on emerging contaminants, microbial hazards, and system-wide trends.

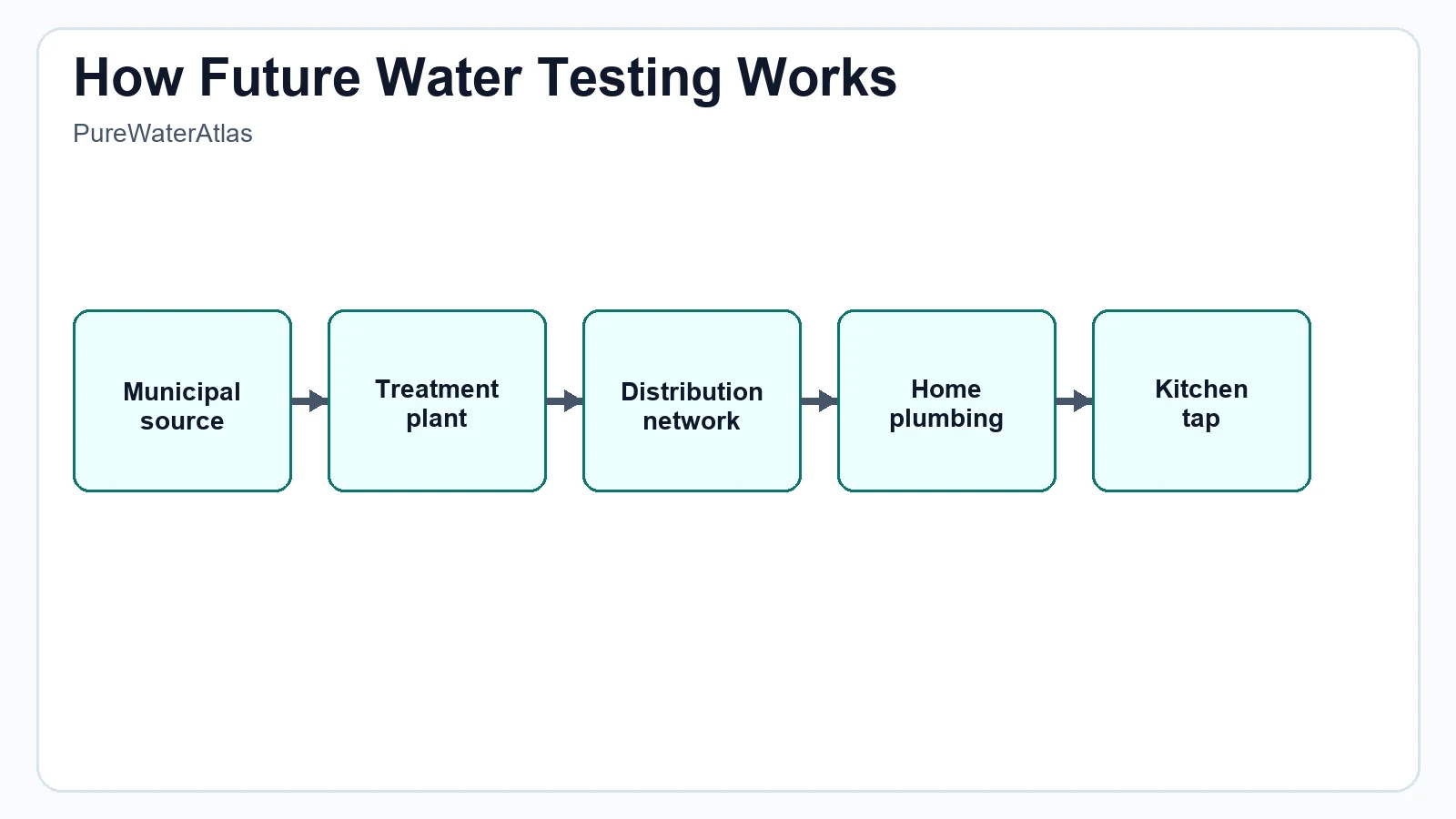

Understanding the future of water testing begins with a scientific reality: drinking water quality is dynamic. Water moves through source waters, treatment plants, storage tanks, distribution systems, premise plumbing, and household fixtures. Along the way, it can pick up metals from corrosion, microorganisms from system breaches, organic chemicals from pollution, nitrates from agricultural runoff, and particulates from disturbance events. A foundational review of water contaminants, treatment, and water quality science helps explain why no single test can capture every possible risk. The future lies in combining multiple methods so that chemical, physical, and microbiological changes can be recognized early and interpreted correctly.

Why the future of water testing matters for drinking water safety

Drinking water safety depends on timely detection, accurate interpretation, and appropriate response. Traditional water testing has done a great deal to protect public health, but it has limitations. Many standard laboratory methods require sample transport, preservation, batch processing, and reporting delays. Some contaminants are episodic, meaning they may appear only briefly after storms, treatment disruptions, or pressure losses. If sampling is infrequent, an event can be missed. Some hazards are also difficult to detect with older methods at the low concentrations now considered important for health protection.

Regulatory and public health organizations have long emphasized the importance of monitoring and risk reduction. The U.S. Environmental Protection Agency drinking water resources outline how standards, treatment techniques, and monitoring requirements support public water safety. The World Health Organization drinking-water fact sheet frames safe water as a core public health necessity, especially because contamination can contribute to infectious disease and chronic exposure risks. The CDC drinking water guidance also highlights the need to understand local risks, especially for private wells and vulnerable populations.

Future-facing testing technologies matter because they help answer practical questions more effectively:

- Is contamination happening right now or did it occur last week?

- Is a change in pH, conductivity, or turbidity a harmless fluctuation or a warning sign?

- Can microbial contamination be flagged before culture results return?

- Can lead release be predicted from corrosion trends rather than discovered only after exposure?

- Can utilities identify contamination hotspots within a distribution system instead of treating the system as uniform?

- Can households and building managers receive clearer guidance about what measured values actually mean?

The future water testing model seeks to improve both speed and context. A result is more useful when it is connected to location, time, water source, treatment status, plumbing conditions, weather patterns, and historical baseline data. This contextual approach is central to the evolution of water testing and is one reason why digital platforms and networked monitoring are becoming so influential.

How water testing evolved from basic analysis to intelligent monitoring

Water testing began with relatively simple assessments of appearance, taste, odor, and a limited set of chemical indicators. Over time, microbiological culture methods, spectroscopy, chromatography, and electrochemical measurements transformed the field. Regulatory frameworks added structure by defining contaminant limits, action levels, sampling schedules, and reporting requirements. This was a major advance, but the model remained centered on periodic sampling and laboratory confirmation.

Today, water testing is evolving through several overlapping shifts:

1. From single-parameter testing to multi-parameter profiling

Instead of testing only one or two concerns, modern systems increasingly measure pH, oxidation-reduction potential, conductivity, dissolved oxygen, temperature, turbidity, free chlorine, total chlorine, ultraviolet absorbance, and other indicators together. This creates a fingerprint of system behavior rather than an isolated value.

2. From delayed reporting to near real-time awareness

Utilities and facility managers increasingly rely on networked instruments that send continuous data streams. This shift is especially visible in real-time water monitoring, where online sensors can flag abnormal conditions within minutes rather than days.

3. From manual interpretation to algorithm-assisted decisions

As datasets become larger, human review alone becomes impractical. This is where AI water quality tools enter the field. These systems can learn normal patterns, detect anomalies, prioritize alarms, and support predictive maintenance.

4. From centralized laboratories to distributed testing

Portable instruments, digital readers, and connected field devices are bringing analytical capability closer to the point of use. This supports faster screening in homes, schools, industrial sites, disaster zones, and remote communities.

5. From broad indicators to high-resolution molecular and chemical detection

Emerging methods can identify genetic markers, trace contaminants, and molecular signatures at extremely low levels. These advances are important for pathogens, antibiotic resistance genes, and contaminants of emerging concern.

This is the essence of water tech innovation in the testing sector: better sensing, better integration, and better interpretation.

Scientific foundations behind next-generation water testing

To understand where testing is going, it helps to understand what scientists are trying to measure. Drinking water quality is usually described through four broad categories:

- Physical parameters such as turbidity, temperature, color, and total dissolved solids

- Chemical parameters such as pH, alkalinity, hardness, nitrate, fluoride, chlorine residual, metals, volatile organic compounds, pesticides, and PFAS

- Microbiological parameters such as total coliforms, E. coli, enterococci, Legionella, protozoa, and viral indicators

- Operational indicators such as disinfectant stability, corrosion potential, biofilm activity, and treatment performance markers

Different technologies work best for different targets. There is no universal detector for “safe water.” Instead, the future depends on layered measurement strategies.

Electrochemical sensing

Electrochemical sensors measure how substances interact with electrodes. These methods are often used for pH, conductivity, chlorine, nitrate, and heavy metal detection. They are attractive because they can be miniaturized, made relatively affordable, and integrated into online systems. Future improvements focus on selectivity, lower detection limits, longer calibration stability, reduced fouling, and better performance in complex water matrices.

Optical and spectroscopic methods

Optical systems measure how water absorbs, emits, scatters, or fluoresces light. Ultraviolet-visible absorbance can help identify organic loading, while fluorescence can indicate natural organic matter or microbial activity patterns. Advanced spectroscopic systems may screen for contamination without needing reagent-heavy workflows. These methods are especially promising for rapid trend analysis and non-destructive monitoring.

Chromatography and mass spectrometry

For high-confidence detection of trace organic contaminants, advanced laboratory tools such as liquid chromatography and gas chromatography coupled with mass spectrometry remain essential. These methods can detect pesticides, industrial chemicals, pharmaceutical residues, and PFAS at very low concentrations. The future here involves automation, faster sample preparation, non-target analysis, and improved methods for identifying unknown compounds.

Molecular microbiology

Culture-based microbiology remains important, but molecular tools are rapidly expanding water testing capabilities. Polymerase chain reaction, quantitative PCR, digital PCR, sequencing, and metagenomics can detect pathogens or microbial markers that culture methods may miss or detect too slowly. Molecular tools can also characterize microbial communities, helping researchers understand biofilms, treatment performance, and contamination pathways.

Biosensors

Biosensors use biological recognition elements such as enzymes, antibodies, nucleic acids, or engineered cells to detect specific contaminants. They hold promise for targeted detection of pathogens, toxins, cyanobacterial compounds, endocrine disruptors, or heavy metals. The challenge is translating laboratory prototypes into rugged, field-ready devices with reliable specificity and shelf life.

Smart water sensors and the rise of distributed monitoring

Among the most visible water technology trends is the emergence of smart water sensors. These devices do more than collect a value. They often include onboard calibration routines, wireless communication, geolocation, cloud connectivity, and automated alerting. In many systems, they are installed throughout treatment trains and distribution networks to track changes as water moves from source to tap.

Smart water sensors can measure:

- pH and oxidation-reduction potential

- Conductivity and salinity

- Turbidity and suspended solids

- Free and total chlorine

- Dissolved oxygen

- Temperature

- Pressure and flow conditions

- Specific ions such as nitrate or ammonium

The value of these sensors lies in pattern recognition. For example, a simultaneous drop in disinfectant residual and pressure, combined with a turbidity increase, may indicate intrusion risk in a distribution system. A slow rise in conductivity in a private well might suggest salinity changes, road salt influence, or other groundwater shifts. A sensor network can detect trends that a single lab test cannot.
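
The intrusion example above can be sketched as a simple rule that fires only when all three changes occur together. This is a minimal illustration, not a validated detection method: the `Reading` class, the `intrusion_suspected` function, and the fractional thresholds are all hypothetical and would need tuning against a utility's own baseline data.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    chlorine_mg_l: float   # disinfectant residual
    pressure_kpa: float    # line pressure
    turbidity_ntu: float   # turbidity


def intrusion_suspected(baseline: Reading, current: Reading,
                        chlorine_drop: float = 0.3,
                        pressure_drop: float = 0.2,
                        turbidity_rise: float = 0.5) -> bool:
    """Return True only if all three changes happen together.

    Thresholds are fractional changes relative to the baseline and are
    placeholder values chosen for illustration.
    """
    chlorine_fell = current.chlorine_mg_l <= baseline.chlorine_mg_l * (1 - chlorine_drop)
    pressure_fell = current.pressure_kpa <= baseline.pressure_kpa * (1 - pressure_drop)
    turbidity_rose = current.turbidity_ntu >= baseline.turbidity_ntu * (1 + turbidity_rise)
    return chlorine_fell and pressure_fell and turbidity_rose


baseline = Reading(chlorine_mg_l=1.0, pressure_kpa=400.0, turbidity_ntu=0.2)
combined_event = Reading(chlorine_mg_l=0.5, pressure_kpa=300.0, turbidity_ntu=0.5)
chlorine_only = Reading(chlorine_mg_l=0.5, pressure_kpa=400.0, turbidity_ntu=0.2)

print(intrusion_suspected(baseline, combined_event))  # all three conditions met
print(intrusion_suspected(baseline, chlorine_only))   # only one condition met
```

Requiring all three conditions is a deliberate choice here: a single parameter moving on its own is more likely to be routine variation or a sensor problem than an intrusion event.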

Modern field instruments are also becoming more user-friendly. Many households and professionals now rely on digital water testing devices for clearer readouts, better repeatability, and easier record keeping than older color-match strips or analog kits alone. While not a replacement for accredited laboratory testing in all situations, these devices are part of the broader shift toward accessible screening and data continuity.

Still, sensor data should be interpreted with caution. Sensor drift, fouling, temperature effects, cross-sensitivity, and matrix interference can all influence readings. Future water testing systems therefore emphasize quality assurance, automated calibration checks, redundant measurements, and confirmation testing when anomalies appear.
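
One way to picture an automated calibration check is to compare an online sensor against paired reference measurements, such as grab samples read on a bench instrument. The sketch below assumes such paired readings exist; the function names and the tolerance value are hypothetical, and a real quality-assurance program would follow the instrument manufacturer's and laboratory's procedures.

```python
from statistics import mean


def mean_offset(sensor, reference):
    """Average difference (sensor minus reference) over paired readings."""
    if not sensor or len(sensor) != len(reference):
        raise ValueError("need equal-length, non-empty paired readings")
    return mean(s - r for s, r in zip(sensor, reference))


def needs_recalibration(sensor, reference, tolerance):
    """Flag the sensor when its average offset exceeds the tolerance."""
    return abs(mean_offset(sensor, reference)) > tolerance


# Hypothetical paired pH readings: online sensor vs. bench reference.
online_ph = [7.30, 7.35, 7.40]
bench_ph = [7.10, 7.12, 7.15]

print(round(mean_offset(online_ph, bench_ph), 3))
print(needs_recalibration(online_ph, bench_ph, tolerance=0.1))
```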

AI water quality systems: what they can and cannot do

AI water quality tools are increasingly discussed as a major force in future water testing, but the term is often used too loosely. In practice, AI in water testing usually refers to machine learning, pattern recognition, anomaly detection, predictive modeling, and decision-support systems built on large operational datasets. These tools do not replace chemistry or microbiology. They sit on top of measurement systems and help interpret complexity.

Useful applications of AI in water testing

- Detecting abnormal patterns in continuous sensor streams

- Predicting equipment failure or calibration drift

- Forecasting contamination risks after storms, wildfires, or flooding

- Estimating unmeasured parameters from correlated variables

- Optimizing sampling schedules by identifying high-risk locations and times

- Classifying events as likely sensor error, process upset, or true contamination signal

For example, a machine-learning model may be trained on years of utility data including rainfall, turbidity, source water conditions, chlorine residual, temperature, customer complaints, and lab-confirmed microbial events. The system may then flag combinations of inputs associated with elevated contamination risk. This can improve situational awareness and operational response.
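
As a much simpler stand-in for the machine-learning models described above, the following sketch flags readings that deviate sharply from a rolling baseline using a z-score. It is a basic statistical check, not a trained model, and the function name, window size, and threshold are assumptions made for illustration.

```python
from collections import deque
from statistics import mean, pstdev


def rolling_anomalies(values, window=5, z_threshold=3.0):
    """Return indices of readings far from the mean of the preceding window."""
    history = deque(maxlen=window)
    flagged = []
    for i, value in enumerate(values):
        if len(history) == window:
            mu = mean(history)
            sigma = pstdev(history)
            # Skip the test when the window is perfectly flat (sigma == 0).
            if sigma > 0 and abs(value - mu) / sigma > z_threshold:
                flagged.append(i)
        history.append(value)
    return flagged


# Hypothetical turbidity stream (NTU) with one spike.
turbidity = [0.20, 0.22, 0.21, 0.19, 0.20, 0.21, 1.50, 0.20]
print(rolling_anomalies(turbidity))
```

Note that the spike itself enters the rolling window after it is flagged, which inflates the baseline spread for the next few readings. Production systems typically handle this with more robust statistics or by excluding flagged points from the baseline.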

Limitations and caution points

AI systems are only as reliable as the data used to train and validate them. If the data are incomplete, biased, or unrepresentative, the model may miss important events or generate false alarms. Black-box models can also be difficult to explain, which matters in public health and regulatory contexts. In addition, AI cannot compensate for poor sampling design, bad calibration, or missing laboratory confirmation.

So while AI-assisted water quality analysis is a real and growing field, it should be viewed as an enhancement to scientific testing rather than a substitute for it. The strongest systems combine physical sensors, validated laboratory methods, hydrologic knowledge, and transparent analytics.

Emerging contaminants will shape future testing priorities

One reason future water testing is expanding so quickly is that the list of contaminants of concern is evolving. Some contaminants have been monitored for decades, but others are receiving increasing attention because of improved detection methods, new toxicological evidence, or changing environmental pressures.

PFAS and ultra-trace chemical detection

Per- and polyfluoroalkyl substances have become a major driver of analytical innovation. These compounds typically occur at extremely low concentrations, and regulatory and health-based discussions often focus on parts-per-trillion levels. Measuring such low levels requires sophisticated sample handling, contamination control, and advanced laboratory analysis. Future methods aim to improve throughput, reduce cost, expand compound coverage, and support non-target screening for related fluorinated substances.
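
To give a sense of scale, the short sketch below converts between units and estimates how much analyte a sample actually contains. It assumes a dilute aqueous sample with a density of about 1 kg/L, in which case one part per trillion by mass is approximately one nanogram per liter. The function names and the example concentration are hypothetical.

```python
def ug_per_l_to_ppt(concentration_ug_l):
    """Convert micrograms per liter to parts per trillion (about ng/L in water)."""
    return concentration_ug_l * 1000.0


def mass_in_sample_ng(concentration_ppt, sample_volume_ml):
    """Approximate analyte mass (ng) in a sample of the given volume."""
    return concentration_ppt * sample_volume_ml / 1000.0


# A hypothetical reading of 0.004 ug/L in a 250 mL sample bottle.
ppt = ug_per_l_to_ppt(0.004)
print(ppt)                          # concentration in ppt (ng/L)
print(mass_in_sample_ng(4.0, 250))  # nanograms of analyte in the bottle
```

At these levels the whole sample holds roughly a nanogram of the target compound, which is why trace contamination from sampling equipment or laboratory materials can meaningfully bias a result.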

Lead and corrosion-related metals

Lead remains a priority because exposure can occur through corrosion in plumbing even when treated water leaving the plant meets standards. Future testing is moving toward better point-of-use understanding, smarter corrosion monitoring, and more nuanced sampling strategies that account for stagnation, fixture type, and plumbing materials. Copper, iron, manganese, and zinc also matter as indicators of corrosion, aesthetic problems, or infrastructure deterioration.

Nitrate, agricultural chemicals, and watershed impacts

In rural areas and groundwater-dependent communities, nitrate remains a critical concern. Sensor improvements and spatially targeted sampling may help detect changing groundwater quality sooner. Similar advances apply to pesticides and herbicides, especially where seasonal use and storm runoff create pulses that periodic testing might miss.

Microplastics and particle characterization

Microplastics remain an evolving area scientifically and analytically. A major challenge is standardization: how to collect, identify, quantify, and interpret particles consistently. Future water testing may incorporate better spectroscopy, imaging, and particle analysis workflows, though the health significance of different exposure levels is still being actively studied.

Pathogens, biofilms, and antibiotic resistance markers

Microbial contamination is not limited to traditional indicator bacteria. Future testing increasingly considers opportunistic pathogens, distribution system biofilms, source water microbial ecology, and resistance-associated genes. Molecular methods can offer faster or broader insight, but interpreting health significance requires care. Detection of genetic material does not always prove that viable infectious organisms are present, which is why confirmatory testing and context-based interpretation are essential.

Practical testing methods in the near future: what users can expect

From a user perspective, the future of water testing will not be a single breakthrough device. It will be a layered ecosystem of methods with different strengths. Many of these methods are already available in some form, but they are becoming more integrated, affordable, and easier to deploy.

Laboratory testing will remain the reference standard for many questions

Certified laboratory analysis will continue to be essential for compliance testing and for contaminants that require highly sensitive, validated methods. This includes many metals, volatile organics, PFAS, radiological contaminants, and confirmatory microbiology. The future improvement here is not replacement, but increased automation, faster turnaround, and broader analytical coverage.

Portable field instruments will expand rapid screening

Handheld meters, portable colorimeters, digital photometers, and mobile sensor platforms are becoming more capable. These tools support rapid decisions in homes, schools, clinics, disaster response, and utility field work. A practical guide to water testing methods helps show why field screening and laboratory confirmation are complementary rather than competing approaches.

Consumer-oriented testing will become more digital and data-driven

Home users are likely to see more app-connected devices, guided workflows, automatic logging, and interpretation support. A result such as nitrate, hardness, chlorine, or pH will increasingly come with context, trend history, and instructions on when professional testing is recommended.

Remote and autonomous systems will improve resilience

In remote communities, industrial sites, and climate-affected regions, autonomous monitoring stations can reduce the need for constant site visits. Solar power, low-power communications, and ruggedized design make longer deployments possible. This is especially valuable where flooding, wildfire, drought, or access limitations disrupt conventional sampling.

How future results will be interpreted: beyond pass or fail

A common misunderstanding about water testing is that every result can be classified simply as safe or unsafe. In reality, interpretation often depends on the parameter, concentration, exposure duration, population sensitivity, and regulatory framework. Future water testing systems are expected to improve how results are translated for professionals and the public.

Interpretation generally falls into several categories:

- Regulatory exceedance: a value above an enforceable maximum contaminant level, treatment technique requirement, or action threshold

- Operational concern: a change suggesting treatment inefficiency, sensor drift, corrosion, or distribution system instability

- Aesthetic issue: color, taste, odor, hardness, or staining concerns that may not indicate direct toxicity but still affect usability

- Public health signal: a result that warrants further investigation because it may indicate microbial contamination, toxic exposure, or chronic risk

For example, turbidity is often not a health hazard by itself at low levels, but rising turbidity can indicate particles that interfere with disinfection or reflect treatment upsets. Chlorine residual is not interpreted the same way as nitrate. A positive coliform result is not interpreted the same way as elevated sodium. Future systems will increasingly present these distinctions clearly rather than treating all parameters as equivalent.
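
The idea that each parameter maps to its own kind of concern can be sketched as a small lookup. Everything here is illustrative: the `Category` names mirror the list above, but the parameter keys and threshold numbers in `EXAMPLE_RULES` are placeholders and must not be read as regulatory limits. Real values would come from the applicable standards and the system's own operating targets.

```python
from enum import Enum


class Category(Enum):
    REGULATORY_EXCEEDANCE = "regulatory exceedance"
    OPERATIONAL_CONCERN = "operational concern"
    AESTHETIC_ISSUE = "aesthetic issue"
    PUBLIC_HEALTH_SIGNAL = "public health signal"
    NO_FLAG = "no flag"


# Placeholder thresholds for illustration only; NOT regulatory values.
EXAMPLE_RULES = {
    "nitrate_mg_l": (10.0, Category.REGULATORY_EXCEEDANCE),
    "turbidity_ntu": (1.0, Category.OPERATIONAL_CONCERN),
    "hardness_mg_l": (180.0, Category.AESTHETIC_ISSUE),
    "coliform_positive_samples": (0, Category.PUBLIC_HEALTH_SIGNAL),
}


def interpret(parameter, value, rules=EXAMPLE_RULES):
    """Return the category tied to a parameter when its threshold is exceeded."""
    threshold, category = rules[parameter]
    return category if value > threshold else Category.NO_FLAG


print(interpret("nitrate_mg_l", 12.0).value)
print(interpret("turbidity_ntu", 0.3).value)
print(interpret("coliform_positive_samples", 1).value)
```

A single-threshold lookup is deliberately simplistic. It ignores exposure duration, population sensitivity, and trends, all of which the surrounding text identifies as part of real interpretation.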

Data visualization will help. Instead of a spreadsheet of isolated values, users may receive trend charts, anomaly scores, geospatial maps, confidence intervals, and event timelines. That can make results easier to understand without oversimplifying them.

Regulatory standards and thresholds will continue to guide technology adoption

Water technology trends do not develop in a vacuum. Regulation strongly influences which technologies become widely used. In public water systems, testing methods must often align with approved analytical procedures, reporting rules, and contaminant standards. As new contaminants become regulated or subject to health advisories, demand for suitable testing methods rises sharply.

Important regulatory concepts include:

- Maximum contaminant levels for certain chemicals and microorganisms

- Treatment technique requirements where direct measurement is not sufficient

- Action levels for contaminants such as lead

- Monitoring frequency rules based on source type, population served, and previous results

- Method detection limits and quality-control expectations for laboratories

Future water testing technologies must therefore demonstrate not only technical performance but also reproducibility, traceability, and practical fit within compliance systems. Some emerging tools will first be used for screening and operational intelligence before they become accepted for formal regulatory reporting.

The hydrologic context also matters. Source waters differ by region, geology, land use, and climate. The U.S. Geological Survey water resources program provides valuable scientific context for how groundwater and surface water conditions shape water quality. Future testing systems increasingly integrate this environmental context into risk assessment and monitoring design.

Challenges that could slow progress

The promise of future water testing is substantial, but several barriers remain.

Cost and access

Advanced instruments, especially for trace organics or molecular testing, can be expensive to acquire, maintain, and staff. Small utilities and private well owners may struggle to access cutting-edge analysis. Technology adoption must therefore be paired with affordability strategies, regional lab networks, and practical screening pathways.

Standardization

New methods are valuable only if results are consistent and comparable. Calibration protocols, sample handling rules, interference testing, validation studies, and inter-laboratory comparisons are essential. This is particularly important for emerging contaminants and novel biosensors.

Data overload

Continuous monitoring can generate enormous amounts of data. Without strong dashboards, analytics, and response plans, more data can create confusion rather than clarity. Utilities need systems that distinguish meaningful signals from background variation.
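
One common way to separate meaningful signals from momentary noise is to require that a condition persist before an alarm is raised. The sketch below assumes each reading has already been reduced to a true/false exceedance flag; the function name and the default of three consecutive readings are hypothetical choices.

```python
def first_persistent_alarm(exceedances, min_consecutive=3):
    """Return the index at which an exceedance has persisted long enough,
    or None if no run of flags reaches min_consecutive."""
    run_length = 0
    for i, exceeded in enumerate(exceedances):
        run_length = run_length + 1 if exceeded else 0
        if run_length >= min_consecutive:
            return i
    return None


# Isolated blips are ignored; the sustained run triggers at index 5.
flags = [False, True, False, True, True, True, False]
print(first_persistent_alarm(flags))
print(first_persistent_alarm([True, False, True]))
```

The trade-off is latency: a longer persistence requirement suppresses more false alarms but delays the response to a genuine event.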

Cybersecurity and data governance

As smart water sensors and cloud-connected platforms expand, cybersecurity becomes part of water safety. Data integrity, access controls, and secure communications matter because false readings or disrupted monitoring could affect public trust and operational response.

False confidence

A polished digital dashboard can create the impression that every risk is being tracked continuously. In reality, many important contaminants still require targeted sampling and laboratory analysis. Future systems must communicate coverage and limitations honestly.

What future water testing means for homes, buildings, and private wells

The future of water testing is not only for large utilities. It will also affect households, schools, apartments, healthcare settings, and private wells. In buildings, premise plumbing conditions can strongly shape water quality at the tap. Stagnation, hot water systems, aging fixtures, corrosion, and low disinfectant residual can all create localized issues that central utility data may not fully reflect.

For homeowners, the most useful changes are likely to include clearer screening tools, easier digital interpretation, and more targeted follow-up recommendations. Rather than telling users only that a reading is high or low, future platforms may suggest when to flush plumbing, repeat sampling after stagnation, test for lead, evaluate treatment devices, or send a sample to a certified laboratory.

Private well owners stand to benefit significantly from improved future water testing. Wells are not regulated in the same way as public water systems, so owners are responsible for testing and maintenance. Smart sensors and simplified digital workflows may help detect changes faster, especially for nitrate, conductivity, pH, and other well-relevant indicators. But periodic comprehensive lab testing will still be important, particularly after flooding, nearby land-use changes, or noticeable changes in taste, odor, or clarity.
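
Detecting a gradual change in a well, such as slowly rising conductivity, amounts to estimating a trend from logged readings. The following sketch fits an ordinary least-squares slope to hypothetical readings; the function name and the sample values are assumptions, and a real assessment would also consider seasonal variation and measurement uncertainty.

```python
def trend_slope(days, values):
    """Least-squares slope of values against days (units per day)."""
    n = len(days)
    if n < 2 or n != len(values):
        raise ValueError("need at least two paired observations")
    mean_x = sum(days) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    if sxx == 0:
        raise ValueError("all observations share the same day")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, values))
    return sxy / sxx


# Hypothetical conductivity log (microsiemens per cm), one reading per month.
sample_days = [0, 30, 60, 90]
conductivity = [400.0, 415.0, 430.0, 445.0]
print(trend_slope(sample_days, conductivity))  # rise per day
```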

For those comparing practical home options, well-designed guides to the best water testing kits can help users distinguish between basic screening products and tests that warrant professional confirmation. The key is matching the tool to the question being asked.

The connection between testing advances and water treatment decisions

Testing has value because it informs action. One of the most important aspects of future water testing is the tighter link between detection and treatment response. As data become more immediate and granular, treatment decisions can become more targeted.

Examples include:

- Adjusting corrosion control when indicators suggest increased lead or copper release risk

- Optimizing disinfection when source water quality shifts after storms

- Targeting filter replacement or membrane maintenance based on actual performance data

- Selecting household treatment systems based on verified contaminants rather than guesswork

This is why testing and treatment should be considered together. A scientific overview of water treatment system selection for safe drinking water is useful because future testing will increasingly guide more precise treatment choices, whether at municipal scale or point of use.

What the next decade may look like

Over the next decade, several developments are likely to define future water testing.

More continuous monitoring in distribution systems

Utilities will likely deploy denser networks of smart water sensors to monitor disinfectant residual, pressure, water age indicators, and intrusion-related anomalies. These systems may not directly measure every contaminant, but they can improve early warning capability and sampling efficiency.

Better integration of field data and lab confirmation

Data platforms will increasingly merge online sensor streams, field screening results, laboratory reports, maintenance logs, and source-water conditions into a single decision environment.
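
A basic building block of such integration is pairing each laboratory result with the online sensor reading closest in time. The sketch below assumes sensor timestamps are sorted and expressed as seconds; the function name and the sample data are hypothetical, and a real platform would also match on location and check how stale the nearest reading is.

```python
from bisect import bisect_left


def nearest_reading(sensor_times, sensor_values, lab_time):
    """Return the (time, value) sensor pair closest to a lab sample time.

    sensor_times must be sorted in ascending order.
    """
    if not sensor_times:
        raise ValueError("no sensor readings available")
    i = bisect_left(sensor_times, lab_time)
    # Only the neighbours on either side of the insertion point can be nearest.
    candidates = [j for j in (i - 1, i) if 0 <= j < len(sensor_times)]
    best = min(candidates, key=lambda j: abs(sensor_times[j] - lab_time))
    return sensor_times[best], sensor_values[best]


# Hypothetical chlorine residual readings every 10 minutes (time in seconds).
times = [0, 600, 1200, 1800]
residuals = [0.80, 0.70, 0.40, 0.75]
print(nearest_reading(times, residuals, lab_time=1100))
```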

Expansion of targeted molecular testing

Rapid molecular assays for microbial risks may become more common in high-priority settings such as hospitals, large buildings, and systems vulnerable to biofilm-associated pathogens.

Improved low-cost testing for underserved settings

One of the most important goals in water tech innovation is not just higher sophistication, but broader access. Lower-cost, robust, low-maintenance testing tools could significantly improve drinking water surveillance in smaller communities and resource-limited settings.

Greater use of predictive models

Instead of waiting for contamination to occur, systems will increasingly estimate where and when problems are most likely. This proactive model may improve resilience under climate stress, infrastructure aging, and shifting source-water conditions.

FAQ

What is meant by future water testing?

Future water testing refers to the next generation of methods and technologies used to assess water quality, including smart water sensors, advanced laboratory analysis, molecular microbiology, digital platforms, and AI-assisted interpretation.

Will smart water sensors replace laboratory testing?

No. Smart water sensors are valuable for continuous monitoring and rapid alerts, but many contaminants still require certified laboratory analysis for accurate identification and regulatory compliance.

How does AI help with water quality testing?

AI helps analyze large datasets, detect unusual patterns, prioritize alarms, and predict risk conditions. It supports decision-making but does not replace validated analytical measurements.

Are future water testing tools useful for homeowners?

Yes, especially for screening and trend tracking. Digital home devices can make results easier to read and store, but important health-related concerns such as lead, nitrate, or microbial contamination may still require laboratory confirmation.

Which contaminants are likely to drive innovation most strongly?

PFAS, lead, nitrate, microbial hazards, corrosion-related metals, and other emerging contaminants are major drivers of change in water testing because they require improved sensitivity, speed, or interpretation.

Conclusion

The future of water testing is not defined by a single machine or method. It is defined by integration: smarter sensors, stronger laboratory science, faster data handling, better interpretation, and more direct links between detection and response. These advances matter because drinking water risks are dynamic, sometimes subtle, and often time-sensitive. As water technology trends continue to develop, the most effective systems will be those that combine scientific rigor with practical usability. For professionals and the public alike, the goal remains the same: to identify real risks accurately, respond early, and protect drinking water quality with evidence rather than assumptions.
